This blog explores its limitations and introduces research that offers solutions, such as composable diffusion models and improved spatial awareness, to enhance its performance with detailed descriptions.

In recent years, Stable Diffusion has emerged as one of the most prominent models for text-to-image generation. Its ability to generate highly detailed, photorealistic images from textual descriptions has made it a popular tool for digital artists, designers, and researchers. However, despite its successes, Stable Diffusion—like many text-to-image models—has its limitations, particularly when faced with complex prompts that involve multiple objects, intricate relationships, or abstract concepts.

This blog post explores how Stable Diffusion struggles to handle complex prompts, providing a detailed critique of these challenges. We will then introduce cutting-edge research that addresses these issues, offering solutions to improve the model’s performance with complicated and detailed inputs.

The Challenge: Handling Complex Prompts

Stable Diffusion’s main appeal lies in its ability to convert text into visually striking images. However, this capability often breaks down when the text prompt becomes too complicated. Complex prompts typically involve multiple components, such as describing various objects, specifying spatial relationships between those objects, or introducing abstract concepts that are difficult to visually interpret.

Some examples of complex prompts that might confuse Stable Diffusion include:

- “A dog and a cat curled up together on a couch”

- "A yellow bowl and blue cat"

- "A red bench and a yellow clock"

- "One cat and two dogs"

Error cases of stable diffusion from complicated prompts

These prompts contain multiple subjects, actions, and relationships that must be simultaneously represented in a coherent image. In many cases, Stable Diffusion may generate an image that captures certain parts of the description well (e.g., flying cars or skyscrapers), but it often fails to connect the elements logically. The result can be fragmented or lack cohesion.

Where Stable Diffusion Falls Short

Several key challenges arise when Stable Diffusion encounters complex prompts:

- Inability to Maintain Object Consistency Stable Diffusion may struggle with maintaining object consistency when dealing with multiple objects described in a prompt. For example, in a prompt about several people interacting, the model might confuse the position or features of each person, resulting in an image where the relationships between subjects are incorrect or unclear.

- Difficulty Interpreting Spatial Relationships Complex prompts often require the model to place objects in specific spatial relationships (e.g., “a cat sitting on a chair next to a vase on the table”). Stable Diffusion frequently misinterprets these relationships, placing objects in illogical positions or even overlapping them incorrectly. This results in images that fail to represent the intended scene.

- Struggles with Abstract or Surreal Concepts When given prompts that involve abstract or surreal ideas (e.g., “a river flowing uphill” or “a dragon made of clouds”), Stable Diffusion may struggle to generate visually coherent representations. This is because the model’s training data primarily consists of real-world images, making it difficult for the model to create images based on abstract or novel concepts that are not grounded in reality.

- Poor Understanding of Object Interactions Prompts that describe specific interactions between objects or characters (e.g., “a person pouring coffee into a cup while reading a book”) pose another challenge. Stable Diffusion may generate each object correctly but fail to depict the interaction itself, such as misplacing the coffee stream or showing the book in an unnatural position.

Why Do These Problems Occur?

The root cause of these challenges lies in how diffusion models, like Stable Diffusion, are trained. These models learn to generate images from noisy inputs by progressively denoising them. However, when the input prompt becomes complex, the model’s ability to balance multiple features and objects becomes impaired. Here’s why:

- Training Data Limitations Text-to-image models like Stable Diffusion are trained on large datasets containing images and corresponding textual descriptions. However, these datasets typically emphasize simpler, real-world scenes with fewer objects and clearer relationships. As a result, the model’s ability to understand more complicated or imaginative prompts is limited by the scope of its training data.

- Ambiguity in Language Natural language is often ambiguous, and complex prompts may have multiple possible interpretations. For example, the phrase “a man standing next to a car” could be interpreted in different ways depending on the specific context. Stable Diffusion’s inability to handle these ambiguities can lead to images that are inconsistent or illogical.

- Limited Attention Mechanisms Stable Diffusion uses attention mechanisms to focus on different parts of the input prompt while generating the image. However, as the complexity of the prompt increases, these attention mechanisms may become overwhelmed, leading the model to focus on some elements at the expense of others. This can result in images that are incomplete or fail to represent all parts of the prompt equally.

Research Solutions to the Complex Prompt Problem

Fortunately, recent research has focused on addressing the limitations of diffusion models like Stable Diffusion when dealing with complex prompts. Several key studies have proposed innovative solutions to enhance the model’s ability to interpret and generate images from complicated descriptions.

1. Composable Diffusion Models: Addressing Object Composition

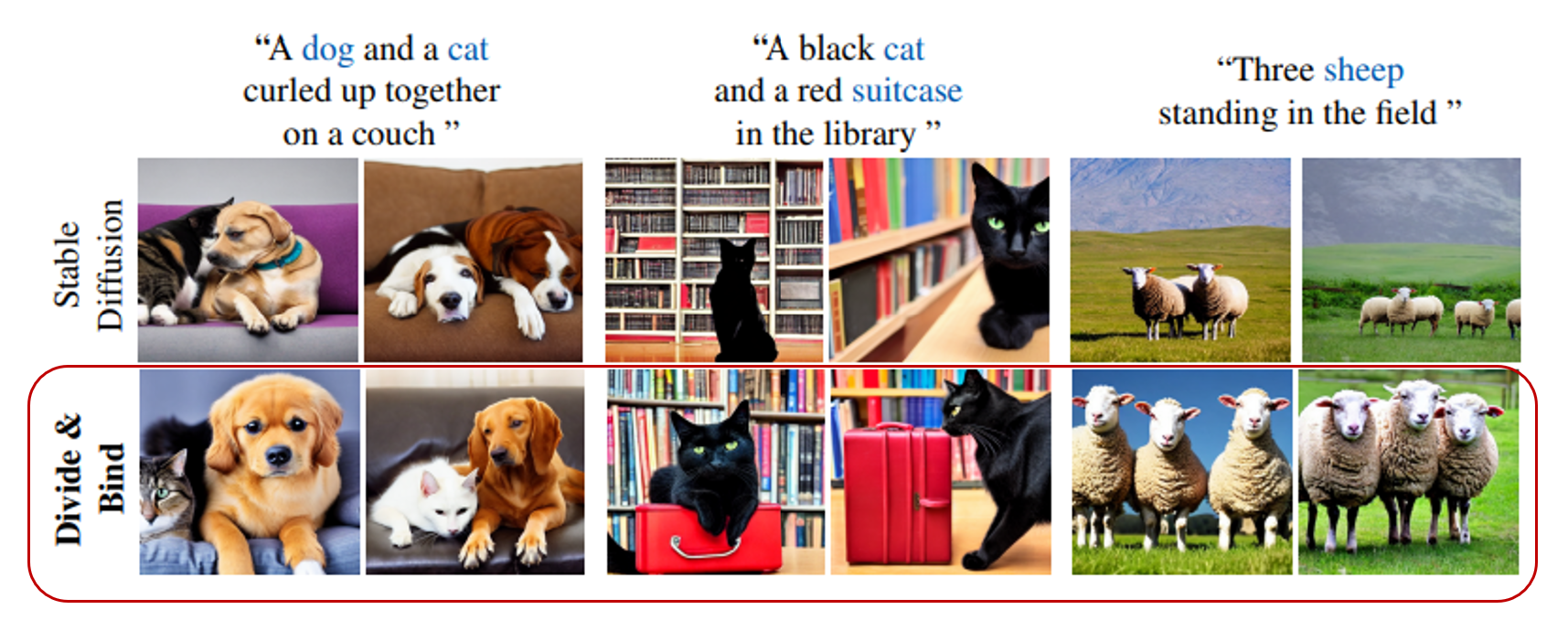

A key approach to addressing Stable Diffusion’s struggles with complex prompts is the development of composable diffusion models. The research outlined in DIVIDE & BIND introduces a method for improving the composition of objects in generated images by enabling the model to process different components of the input separately.

How It Works: In composable diffusion, the model generates images by processing different parts of the prompt individually before combining them into a coherent whole. For example, when given a prompt describing multiple objects (e.g., “a cat sitting on a table next to a vase”), the model generates an image of the cat, the vase, and the table separately. These components are then combined using a shared context, ensuring that the relationships between objects are represented correctly.

Results: This approach significantly improves the model’s ability to handle complex prompts. By breaking down the input into smaller, more manageable parts, composable diffusion enables the model to maintain consistency between objects and accurately represent spatial relationships.

2. Latent Diffusion with Enhanced Spatial Awareness

Another promising line of research, presented in "Expressive text-to-image generation with rich text.", proposes enhancing the spatial awareness of latent diffusion models like Stable Diffusion. This approach aims to improve the model’s ability to interpret and generate complex scenes by explicitly encoding spatial relationships during the training process.

How It Works: The researchers introduce a spatial conditioning mechanism that allows the model to understand the layout of the scene described in the prompt. By encoding information about the relative positions of objects in the scene, the model is better equipped to generate images that accurately reflect the specified spatial relationships.

Results: This approach has been shown to improve the accuracy of spatial relationships in generated images. For example, when given a prompt like “a dog sitting under a tree,” the model is more likely to place the dog in a realistic position relative to the tree, rather than floating in mid-air or overlapping with other elements in the scene.

3. Improving Attention Mechanisms: Multi-Scale Attention

Research presented in Attend-and-Exciteoffer focuses on improving the attention mechanisms used in diffusion models. The researchers propose a multi-scale attention framework that allows the model to focus on different parts of the input prompt at varying levels of detail.

How It Works: In the multi-scale attention framework, the model processes the input prompt at different scales, allowing it to focus on both the overall structure of the scene and the finer details of individual objects. This enables the model to balance the complexity of the prompt more effectively, ensuring that all parts of the input are represented in the final image.

Results: This approach improves the model’s ability to generate images from complex prompts by ensuring that both large-scale and small-scale features are accurately represented. For example, in a prompt describing a busy street scene with multiple people and vehicles, the model is able to generate both the overall layout of the scene and the detailed features of each individual object.

Conclusion: Overcoming the Limitations of Stable Diffusion in Complex Prompt Handling

Stable Diffusion, while a powerful and flexible tool for generating images from text, faces significant challenges when dealing with complex prompts. These challenges include inconsistencies in object representation, difficulty interpreting spatial relationships, struggles with abstract concepts, and poor handling of interactions between objects. These limitations often lead to images that fail to accurately represent the intricacies and subtleties described in complex prompts.

However, recent research is actively addressing these shortcomings. The development of composable diffusion models, enhanced spatial awareness, and multi-scale attention mechanisms offer promising solutions to these challenges. By breaking down prompts into manageable components, improving spatial understanding, and refining how models focus on different parts of an image, these advancements are setting the stage for more accurate and sophisticated text-to-image generation.

As these innovations are integrated into future iterations of Stable Diffusion and other diffusion models, the ability to handle complex prompts will improve dramatically. Users will be able to generate more detailed, coherent, and contextually accurate images from even the most intricate and abstract descriptions. While the current limitations of Stable Diffusion may hinder its performance in some areas, the ongoing research efforts provide a clear path toward overcoming these obstacles, making AI-powered image generation even more powerful and accessible in the future.